My students could tell you what is wrong with that ChatGPT paper

Everything we interact with – from the food we eat, to the technology we use – changes our brains in one way or another. It's an entirely separate question to know whether these changes are meaningful.

I take a lot of inspiration from The Real World when I design my classes.

On the first day of my neurobiology course, I show my students a 2022 headline that reads, "It's true – life may well flash before your eyes when you die." It's a lovely idea but contrary to the headline, it's not true. More precisely, we don't currently have the evidence.

Later on we discuss methods for recording activity from human brains, one of them being electroencephalography, or EEG for short. I spend more and more time explaining EEG and its limitations because of its rising popularity. Unfortunately, it's incredibly easy to stick an EEG cap on a bunch of people and generate lots of squiggly brain traces and heatmaps on brains. It's much, much harder to do it well, or to make any theoretical sense of it.

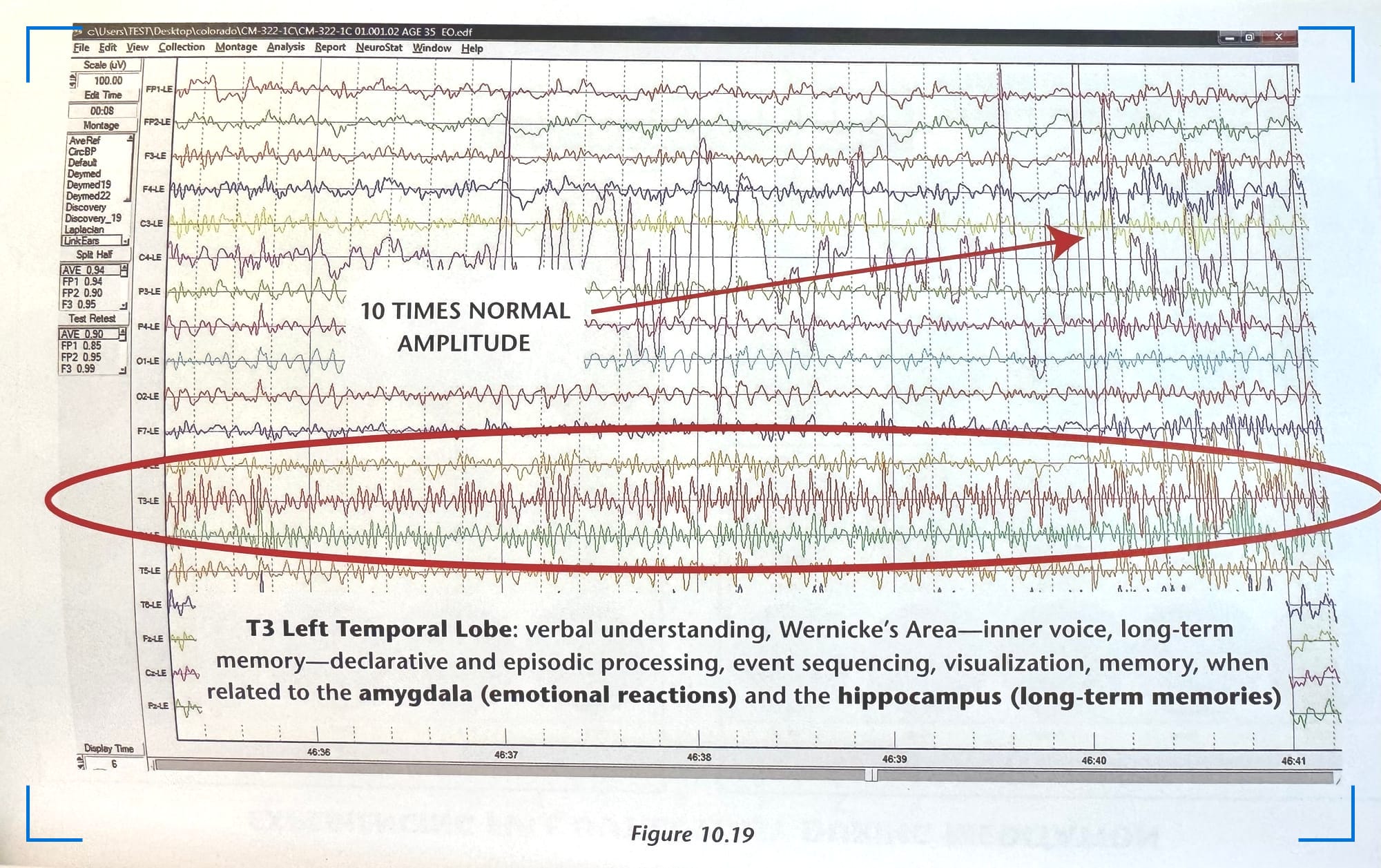

The misuse of EEG (or brain data, for that matter) isn't new. In 2014, chiropractor-turned-quantum-physics-enthusiast Joe Dispenza released a book called You Are The Placebo. In a bonus color insert in the middle of the book, Dispenza includes screenshots from EEG recordings during meditation. In one recording, he annotates a trace as "10 TIMES NORMAL AMPLITUDE" and argues that this means a participant's brain is "processing ten times the normal amounts of energy." Reader, it is not.

Any person who has used EEG – including the students in my class – could look at that recording and tell you that it's an improperly connected electrode.

When students leave my class, I expect that they can evaluate headlines as well as EEG data itself to determine whether various claims are supported. So yeah, the MIT research artifact that I've started calling the preprint that will not die is going to make an appearance as well. Yes, you know the one. It's the one that anyone who wants to make a case against AI will cite to show you that AI lights your brain on fire, or turns it to rot, or makes you passionately defend Nickelback as an artist.

As discussed on our podcast, Change, Technically, I firmly believe we need to study the impact of AI use on our brains, behavior, and environment. As an educator, I worry about students using AI to short-circuit their own learning. As a musician and an artist, I care about protecting human-driven creativity. As an inhabitant of this planet, I worry about how much energy AI uses.

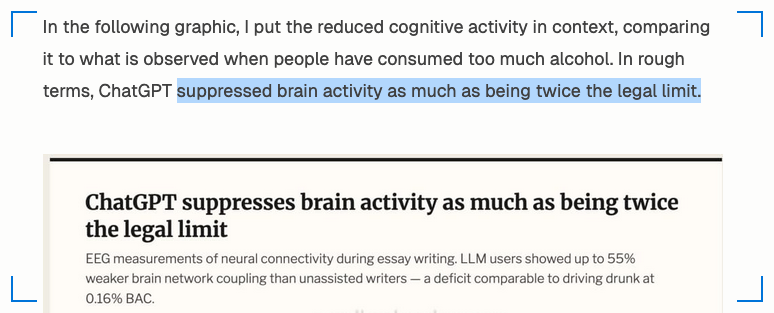

But as a neuroscientist presented with many poorly construed conclusions and wildly exaggerated headlines derived from this paper, I need to tell you: the file named "Paper draft.pdf" doesn't contain any evidence that using ChatGPT suppresses brain activity as much as being twice the legal alcohol limit, as at least one fearmongering post would have you think.

So why, you ask, am I not convinced by the data in that preprint?

Let's start with some basics.

At the risk of unveiling my exams for all of my future students, I want to tell you about a question I've started asking. It goes like this:

"The methods of an EEG paper read, 'We applied a low pass filter at 0.1 Hz and high pass filter at 100 Hz, and notch filter at 60 Hz.' If the researchers were trying to measure brain activity while removing electrical noise, which of the filter values seem incorrect?"

a. The low pass filter

b. The high pass filter

c. The notch filter

d. Both the high and low pass filters

e. All of the filters

This sentence is directly taken from the preprint. The answer is d. And yes, we're all allowed our typos and yes, I have my own mnemonics for remembering which is high- and low-pass. But had they actually filtered their data in this way, they would have filtered out all of the frequencies we care about, from 0.1 to 100 Hertz (Hz).

Clearly (hopefully?) they didn't, so let's move on.
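Before we do, here's a minimal sketch (mine, not the preprint's) for anyone who wants to check answer d themselves. It treats the filters as idealized brick walls, which real filters are not, and the one representative frequency per band is just an illustrative choice. The function name `passes` and the band values are my own assumptions.

```python
def passes(freq_hz, low_pass_hz, high_pass_hz):
    """Idealized filters: a low-pass keeps frequencies at or below its cutoff,
    a high-pass keeps frequencies at or above its cutoff."""
    return high_pass_hz <= freq_hz <= low_pass_hz

# One illustrative frequency (Hz) per classic EEG band. Assumed values.
bands = {"delta": 2, "theta": 6, "alpha": 10, "beta": 20, "gamma": 40}

# Cutoffs exactly as the quoted methods sentence states them.
as_written = {name: passes(f, low_pass_hz=0.1, high_pass_hz=100)
              for name, f in bands.items()}

# Cutoffs with the two labels swapped, which is presumably what was meant.
as_intended = {name: passes(f, low_pass_hz=100, high_pass_hz=0.1)
               for name, f in bands.items()}

print("as written: ", as_written)
print("as intended:", as_intended)
```

With the cutoffs as written, none of the bands survive. Swap the two labels and all of them do.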

If you took my class, you'd learn that there are a few ways we tend to analyze EEG data. The most common way breaks the signal down into different frequencies, in the same way a music producer analyzes how much bass or treble is in their track. In my class, students close their eyes and watch as their alpha activity (at about 10 Hz) grows. I chose this experiment because you can literally see the changes in the recording with your own eyes.
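If you'd like to see what "breaking the signal down into frequencies" looks like in code, here is a toy sketch using only Python's standard library. Everything in it is an assumption for illustration: the signal is synthetic (two sine waves, not real EEG), the 128 Hz sampling rate is made up, and the 8 to 12 Hz alpha and 13 to 30 Hz beta edges are one common convention rather than anything from my class or the preprint.

```python
import cmath
import math

FS = 128   # sampling rate in Hz (assumed for this toy example)
N = 256    # 2 seconds of samples, so frequency bins are 0.5 Hz apart

def synthetic_eeg(alpha_amplitude):
    """Toy signal: a 10 Hz 'alpha' sine plus a fixed 20 Hz 'beta' sine."""
    return [
        alpha_amplitude * math.sin(2 * math.pi * 10 * n / FS)
        + 1.0 * math.sin(2 * math.pi * 20 * n / FS)
        for n in range(N)
    ]

def band_power(signal, low_hz, high_hz):
    """Sum squared DFT magnitudes over bins between low_hz and high_hz."""
    n_samples = len(signal)
    total = 0.0
    for k in range(n_samples // 2 + 1):
        freq = k * FS / n_samples
        if low_hz <= freq <= high_hz:
            coeff = sum(x * cmath.exp(-2j * math.pi * k * n / n_samples)
                        for n, x in enumerate(signal))
            total += abs(coeff) ** 2
    return total

eyes_open = synthetic_eeg(alpha_amplitude=1.0)
eyes_closed = synthetic_eeg(alpha_amplitude=3.0)  # pretend alpha tripled

alpha_open = band_power(eyes_open, 8, 12)
alpha_closed = band_power(eyes_closed, 8, 12)
beta_open = band_power(eyes_open, 13, 30)
beta_closed = band_power(eyes_closed, 13, 30)

print("alpha power ratio (closed / open):", alpha_closed / alpha_open)
print("beta power ratio (closed / open):", beta_closed / beta_open)
```

Tripling the alpha amplitude multiplies alpha-band power by about nine while beta stays put. A jump of that size is easy to spot even in the raw trace.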

Very few changes in EEG activity are this visible. In the 2022 paper that recorded EEG just before and during someone's death by cardiac arrest (and was hyped by the headline above), there was a huge burst in high-frequency gamma activity before death. That too was pretty visible.

Further, very few changes in EEG are as interpretable as the experiment in my class. Closing your eyes increases alpha activity in your occipital lobe because you've removed the visual input that normally decorrelates brain activity. If students manage to relax with their eyes closed (and especially if they're experienced meditators), they may also see an added bump in alpha, an indicator of a brain at rest.

As for the dying person, we don't have a theory for why gamma specifically would increase – yes, gamma activity is involved in memory consolidation, but it's also involved in approximately a bazillion other things. Papers that use EEG well begin with a theoretical framework for why they would expect to see particular changes. When my students propose EEG work for their final projects, they have to tell me what they expect to change, and why.

The preprint at hand similarly focuses on particular frequency bands, but they take this one, somewhat unusual, step further. They look for directed connectivity using the "Directed Transfer Function" approach, which is derived from algorithms originally designed for predicting causality in economics.
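To make "directed" a little more concrete, here is a toy sketch of the textbook normalized DTF for a made-up two-channel model. This is not the preprint's pipeline, which the manuscript doesn't describe in enough detail to reproduce. The coefficients in `COEF`, the single time lag, and the 128 Hz sampling rate are all hypothetical. In a real analysis you would fit those coefficients to recorded data, and that fitting step is where a lot can go wrong.

```python
import cmath
import math

FS = 128.0  # sampling rate in Hz (assumed)

# Hypothetical lag-1 autoregressive coefficients: COEF[i][j] is the influence
# of channel j (one sample ago) on channel i. Channel 0 drives channel 1, and
# nothing flows back from channel 1 to channel 0.
COEF = [[0.5, 0.0],
        [0.4, 0.5]]

def dtf(freq_hz):
    """Normalized DTF at one frequency for the 2-channel, lag-1 toy model.

    result[i][j] is the share of inflow to channel i attributed to channel j,
    so each row sums to 1.
    """
    z = cmath.exp(-2j * math.pi * freq_hz / FS)
    # A(f) = I - COEF * z
    a = [[(1.0 if i == j else 0.0) - COEF[i][j] * z for j in range(2)]
         for i in range(2)]
    det = a[0][0] * a[1][1] - a[0][1] * a[1][0]
    # Transfer matrix H(f) is the inverse of A(f) (closed-form 2x2 inverse).
    h = [[a[1][1] / det, -a[0][1] / det],
         [-a[1][0] / det, a[0][0] / det]]
    power = [[abs(h[i][j]) ** 2 for j in range(2)] for i in range(2)]
    return [[power[i][j] / sum(power[i]) for j in range(2)] for i in range(2)]

alpha_dtf = dtf(10.0)          # evaluate in the alpha range
from_0_to_1 = alpha_dtf[1][0]  # influence of channel 0 on channel 1
from_1_to_0 = alpha_dtf[0][1]  # influence of channel 1 on channel 0

print("DTF 0 -> 1 at 10 Hz:", from_0_to_1)
print("DTF 1 -> 0 at 10 Hz:", from_1_to_0)
```

Because the toy model only lets channel 0 influence channel 1, the DTF from 0 to 1 comes out around 0.3 while the reverse direction is zero. That asymmetry is the whole point of a directed measure.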

Broadly speaking, neuroscientists care about how things are connected because it constrains the number of things a brain can do. There are really two kinds of connectivity: structural and functional. Structural connectivity is the literal axons between your brain regions, the string between two cans. Functional connectivity is an estimate of structure, but it's not the same thing. EEG gives us a window into how brain regions are functionally connected, or how they tend to work together in time.

The preprint asks whether particular electrodes on the EEG cap are "connected" differently when participants are using an LLM alongside their brains. It's hard for me to say how well this analysis was done – there aren't enough details in the manuscript to know exactly how they implemented their analysis, and any peer reviewer would undoubtedly have pointed this out. Even better, in the spirit of open science, the authors could have included their analysis code.

Nonetheless, the preprint reports a whole laundry list of findings, which I won't exhaustively go through here. Some of these findings could very well stand up to replication in another study (something we rely on especially when we're making big claims about brain-destroying forces) but my suspicion is that most of them are quite fragile.

For now, let's take their findings at face value. The first finding is that the brain-only group shows more connectivity between particular nodes, within the alpha band. The question then becomes: what does that mean? Is that good or bad? And here we are, back to the need for a theoretical framework.

Without a theoretical framework, we don't know whether such increases are good or bad. As a general rule, more connectivity is probably good, but as I pointed out in my recent talk for Monktoberfest, there's also a broad increase in connectivity in the brains of people when they're having seizures. In fact, one paper that uses DTF as a way to predict seizures notes:

"from the interictal phase to the preictal phase [the period immediately before a seizure], 22 of 36 connectivity between brain areas were increased prominently"

A healthy brain has just the right amount of stuff happening. There are good reasons our entire brain isn't active all of the time (the movie Lucy ironically nails this) – it would consume too much energy and it would be chaotic. Really, the goal is to have refined patterns of brain activity, like a bonsai tree that has been carefully pruned over time.

Various things affect the extent to which brain regions fire together. Another recent paper uses DTF to compare EEG recordings from people who used cannabis, many drugs, or no drugs at all. They see some decreases in alpha band connectivity for cannabis users alongside increases in connectivity for folks who use multiple drugs. (We could talk about why that might be – but that's probably another post.)

Okay, so The Preprint has a hodgepodge of findings with a grab bag of theoretical and empirical support and perhaps is being over-hyped, but AI must be doing something to our brains, right? Sure, maybe. But at the time of writing this, I don't see any other studies that have similarly measured brain activity during LLM use. (If you know of one, do comment below!)

That said, I'm not sure neuroscience is the right answer to the question we're asking. Lots of really good work is exploring how our thinking and behavior are changing with LLM use, and that's the first level that we need to understand. When folks put a brain on it, they're mostly just trying to scare you.

If I had to venture a guess, as a neuroscientist who has published papers on stimulant addiction and is currently writing a book on mind-body approaches: even well-collected, well-reported data will not support the extreme headlines I keep seeing. Some people may be using LLMs in a way that is ultimately counterproductive to their work or their minds, but others could be leveraging them to offload low-level tasks to make room for the kind of thinking that only humans can do.

Everything we interact with – from the food we eat, to the technology we use – changes our brains in one way or another. It's an entirely separate question to know whether these changes are meaningful.

And my students probably could have told you that.